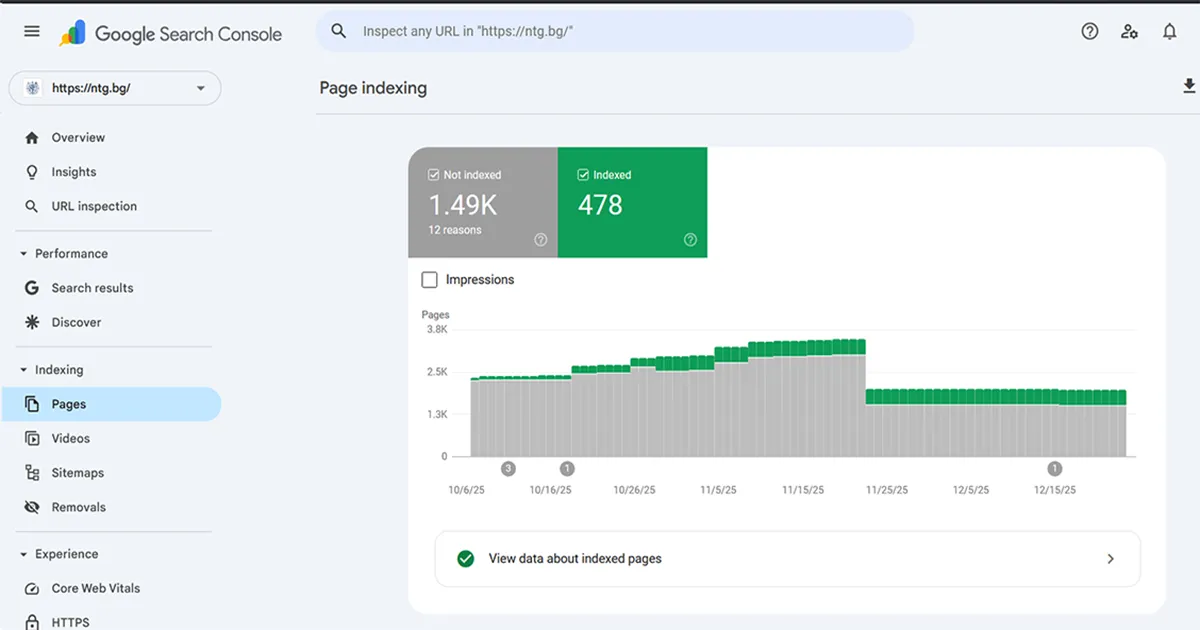

How to stop OpenCart search and parameter URLs from being indexed, reduce “Crawled – not indexed” pages, and free crawl budget for categories and products.

If you see a lot of “Crawled – currently not indexed” pages in Search Console, with examples like index.php?route=product/search&tag=..., the problem is almost always the same.

Google discovers a huge number of dynamic URLs with no SEO value.

Below is the exact method to fix this, without breaking the store and without blocking important pages.

⚠ This is not “ranking magic”. This is SEO hygiene. If Google wastes resources on parameters and search combinations, real categories and products are crawled less often, indexing becomes slower and you get noise instead of SEO results.

With OpenCart, Google very often crawls (or even indexes) URLs that should never be exposed to it. These include search pages, tag results, filter parameters, sorting, limits, pagination and endless combinations of them. The result is a massive number of “pages” that bring no traffic but consume crawl budget.

“Crawled – currently not indexed” means Google has crawled the page, analyzed its content and decided not to index it. By itself, this is not a problem. It becomes a problem when the number grows large, because Google spends time crawling pages that will never bring traffic.

In practice, Google is saying: “I see it, but I don’t need it”. Our goal is to make Google stop crawling it, or receive a clear signal that this page is not meant for search results.

The most common sources of noise are:

Search and tag results: index.php?route=product/search&tag=... or &keyword=...

Sorting and limits: ?sort=, &order=, &limit=

Filter parameters: &color=, &price=

The goal is not to hide the site from Google. The goal is for Google to see only valuable pages: categories, products, static pages, blog posts, landing pages. Everything that is an internal tool (search, filters, sorting) must be controlled.
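To see which of the noise sources listed above dominates in your own store, you can group a list of crawled URLs (for example, an export from Search Console) by type. The following is a minimal Python sketch; the function names `classify_url` and `summarize` are illustrative, and the parameter sets only cover the names mentioned in this article, so extend them for your own filter module.

```python
from collections import Counter
from urllib.parse import urlsplit, parse_qs

# Parameter names taken from the article; these sets are assumptions,
# not an exhaustive list of what an OpenCart store can emit.
SORT_PARAMS = {"sort", "order", "limit"}
FILTER_PARAMS = {"color", "price"}


def classify_url(url: str) -> str:
    """Return the noise source a URL belongs to, or "clean" if none applies."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    # Search and tag results share the same route.
    if query.get("route") == ["product/search"]:
        return "search"
    if SORT_PARAMS & query.keys():
        return "sorting"
    if FILTER_PARAMS & query.keys():
        return "filter"
    return "clean"


def summarize(urls):
    """Count how many URLs fall into each noise source."""
    return Counter(classify_url(u) for u in urls)
```

Running `summarize` over the exported URLs gives a quick picture of where crawl budget is going before you change anything.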

We do this on multiple levels. One alone is not enough; the combination matters.

robots.txt does not remove already indexed URLs, but it stops future crawling and reduces noise. A typical OpenCart example that covers most stores:

User-agent: *

Disallow: /admin/

Disallow: /system/

Disallow: /storage/

Disallow: /vendor/

Disallow: /*?route=product/search

Disallow: /*?sort=

Disallow: /*&sort=

Disallow: /*?order=

Disallow: /*&order=

Disallow: /*?limit=

Disallow: /*&limit=

Allow: /

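Sitemap: https://YOUR-DOMAIN.com/sitemap.xml

When a page must keep working for users but should not be indexed, the cleanest solution is noindex, follow for search and result pages, implemented via controller logic or OCMOD. Google only sees a noindex on URLs it is allowed to crawl, so for search URLs that are already indexed, let the noindex take effect before blocking them in robots.txt.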
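Before deploying wildcard rules like the ones in the robots.txt example, it helps to check which paths they actually block. Python's standard `urllib.robotparser` matches plain prefixes rather than `*` wildcards, so the sketch below uses a small hand-written matcher instead. It approximates Google's documented behavior (`*` matches any characters, `$` anchors the end, the longest matching rule wins, Allow wins a tie) and is a testing aid under those assumptions, not a reference implementation. `RULES` and `is_allowed` are illustrative names.

```python
import re

# A subset of the rules from the robots.txt example, in file order.
RULES = [
    ("disallow", "/admin/"),
    ("disallow", "/*?route=product/search"),
    ("disallow", "/*?sort="),
    ("disallow", "/*&sort="),
    ("disallow", "/*?limit="),
    ("disallow", "/*&limit="),
    ("allow", "/"),
]


def _to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into an anchored regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(path: str, rules=RULES) -> bool:
    """Return True if the path (including query string) may be crawled."""
    best_len, allowed = -1, True  # no matching rule means allowed
    for kind, pattern in rules:
        if _to_regex(pattern).match(path):
            length = len(pattern)
            # Longest pattern wins; on equal length, Allow takes precedence.
            if length > best_len or (length == best_len and kind == "allow"):
                best_len, allowed = length, kind == "allow"
    return allowed
```

Feeding it a handful of real category, product and search paths from the store is a cheap way to confirm that no important page is blocked by accident.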

For category pages with sort, order, or limit parameters, the canonical must point to the clean URL without those parameters.
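The noindex and canonical rules can be expressed as one decision per requested URL. In OpenCart this logic would live in the PHP controller or an OCMOD; the Python sketch below only illustrates the decision itself. The function name `head_tags` is hypothetical, and keeping other parameters (such as `page`) in the canonical is an assumption, not something the rules above prescribe.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Routes that should work for users but stay out of the index.
NOINDEX_ROUTES = {"product/search"}
# Parameters that only change presentation, not content.
PRESENTATION_PARAMS = {"sort", "order", "limit"}


def head_tags(url: str) -> dict:
    """Return the robots meta value and canonical URL for a requested URL."""
    parts = urlsplit(url)
    params = parse_qsl(parts.query, keep_blank_values=True)
    route = dict(params).get("route", "")

    if route in NOINDEX_ROUTES:
        # Search and tag results: keep links followable, stay out of the index.
        return {"robots": "noindex, follow", "canonical": None}

    # Everything else: canonical is the same URL minus presentation parameters.
    kept = [(k, v) for k, v in params if k not in PRESENTATION_PARAMS]
    canonical = urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept, safe="/"), "")
    )
    return {"robots": "index, follow", "canonical": canonical}
```

For example, `head_tags("https://example.com/shoes?sort=p.price&order=ASC")` yields a canonical of `https://example.com/shoes`, which is what the category template should print in its `<link rel="canonical">` tag.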

URLs carrying UTM, gclid, fbclid and similar tracking parameters should be 301-redirected to the clean URL to reduce duplication.
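The redirect target is simply the requested URL with the tracking parameters removed. Here is a minimal Python sketch of that computation; `redirect_target` is an illustrative name, and the parameter list is an assumption limited to the examples named above. The actual 301 would be issued by the web server or the store's front controller.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Exact names and prefixes treated as tracking parameters (assumed list).
TRACKING_PARAMS = {"gclid", "fbclid"}
TRACKING_PREFIXES = ("utm_",)


def redirect_target(url: str):
    """Return the clean URL to 301 to, or None if no redirect is needed."""
    parts = urlsplit(url)
    params = parse_qsl(parts.query, keep_blank_values=True)
    kept = [
        (k, v)
        for k, v in params
        if k.lower() not in TRACKING_PARAMS
        and not k.lower().startswith(TRACKING_PREFIXES)
    ]
    if len(kept) == len(params):
        return None  # nothing was stripped, serve the page as-is
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path,
         urlencode(kept, safe="/"), parts.fragment)
    )
```

Returning `None` for already clean URLs keeps the check cheap and avoids redirect loops.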

The sitemap must contain categories and products only, not search, filters or parameters.
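One way to enforce this is to filter URLs at the moment the sitemap is generated. The sketch below assumes SEO URLs are enabled, so that every category and product has a clean address without a query string; under that assumption, any URL with a query string is skipped. `build_sitemap` is a hypothetical helper, not part of OpenCart.

```python
from urllib.parse import urlsplit
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls) -> str:
    """Build a sitemap XML string, skipping any URL that has a query string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        if urlsplit(url).query:
            # Search, filter, sort and tracking URLs never enter the sitemap.
            continue
        loc = ET.SubElement(ET.SubElement(urlset, "url"), "loc")
        loc.text = url
    return ET.tostring(urlset, encoding="unicode")
```

If the store still uses index.php?route=... addresses for real pages, the filter would need an allowlist of routes instead of the blanket query-string check.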

410 Gone is useful only for URLs that should not exist at all and are already indexed, for example mass-indexed search URLs. It should be used selectively, not as a blunt tool.


The rule is simple: Google should crawl value, not noise. That’s how indexation becomes stable and predictable.